If 2022 marked the moment when generative AI’s disruptive potential first captured wide public attention, 2024 has been the year when questions about the legality of its underlying data have taken center stage for businesses eager to harness its power.

The USA’s fair use doctrine, along with the implicit scholarly license that had long allowed academic and commercial research sectors to explore generative AI, became increasingly untenable as mounting evidence of plagiarism surfaced. Subsequently, the US has, for the moment, disallowed AI-generated content from being copyrighted.

These matters are far from settled, and far from being imminently resolved; in 2023, due in part to growing media and public concern about the legal status of AI-generated output, the US Copyright Office launched a years-long investigation into this aspect of generative AI, publishing the first segment (concerning digital replicas) in July of 2024.

In the meantime, business interests remain frustrated by the possibility that the expensive models they wish to exploit could expose them to legal ramifications when definitive legislation and definitions eventually emerge.

The expensive short-term solution has been to legitimize generative models by training them on data that companies have a right to exploit. Adobe’s text-to-image (and now text-to-video) Firefly architecture is powered primarily by its purchase of the Fotolia stock image dataset in 2014, supplemented by the use of copyright-expired public domain data*. At the same time, incumbent stock photo suppliers such as Getty and Shutterstock have capitalized on the new value of their licensed data, with a growing number of deals to license content or else develop their own IP-compliant GenAI systems.

Synthetic Solutions

Since removing copyrighted data from the trained latent space of an AI model is fraught with problems, mistakes in this area could potentially be very costly for companies experimenting with consumer and business solutions that use machine learning.

An alternative, and much cheaper, solution for computer vision systems (and also for Large Language Models, or LLMs) is the use of synthetic data, where the dataset is composed of randomly generated examples of the target domain (such as faces, cats, churches, or even a more generalized dataset).

Sites such as thispersondoesnotexist.com long ago popularized the idea that authentic-looking photos of ‘non-real’ people could be synthesized (in that particular case, through Generative Adversarial Networks, or GANs) without bearing any relation to people that actually exist in the real world.

Therefore, if you train a facial recognition system or a generative system on such abstract and non-real examples, you can, in theory, obtain a model that performs to a photorealistic standard without needing to consider whether the data is legally usable.

Balancing Act

The problem is that the systems which produce synthetic data are themselves trained on real data. If traces of that data bleed through into the synthetic data, this potentially provides evidence that restricted or otherwise unauthorized material has been exploited for monetary gain.

To avoid this, and in order to produce truly ‘random’ imagery, such models need to ensure that they are well-generalized. Generalization is the measure of a trained AI model’s capability to intrinsically understand high-level concepts (such as ‘face’, ‘man’, or ‘woman’) without resorting to replicating the actual training data.

Unfortunately, it can be difficult for trained systems to produce (or recognize) granular detail unless they train quite extensively on a dataset. This exposes them to the risk of memorization: a tendency to reproduce, to some extent, examples of the actual training data.

This can be mitigated by setting a more relaxed learning rate, or by ending training at a stage where the core concepts are still ductile and not associated with any specific data point (such as a specific image of a person, in the case of a face dataset).
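As a rough illustration of the second remedy, the sketch below applies a generic, patience-based early-stopping rule to a list of per-epoch validation losses. It is a minimal sketch under stated assumptions: the function name, the `patience` and `min_delta` parameters and the loss values are all hypothetical, and in practice generative models are often monitored with proxy metrics (such as FID) rather than a single validation loss.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return the epoch whose checkpoint would be kept under a simple
    patience-based early-stopping rule (hypothetical helper).

    Training halts once `patience` consecutive epochs fail to improve the
    best validation loss by more than `min_delta`; the best epoch seen up
    to that point is returned.
    """
    best_epoch = 0
    best_loss = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss = loss
            best_epoch = epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break  # stop here; later epochs are never trained
    return best_epoch


losses = [1.0, 0.8, 0.7, 0.72, 0.75, 0.78, 0.6]  # hypothetical values
print(early_stopping_epoch(losses, patience=3))  # → 2
print(early_stopping_epoch(losses, patience=4))  # → 6
```

With `patience=3`, training halts after epoch 5 and the epoch-2 checkpoint is kept, so the later improvement is never reached; a larger patience buys more training (and potentially finer detail) at the cost of more exposure to memorization.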

However, both of these remedies are likely to lead to models with less fine-grained detail, since the system never gets the chance to progress beyond the ‘basics’ of the target domain and down to its specifics.

Therefore, in the scientific literature, very high learning rates and comprehensive training schedules are generally applied. While researchers usually attempt to compromise between broad applicability and granularity in the final model, even slightly ‘memorized’ systems can often misrepresent themselves as well-generalized – even in initial tests.

Face Reveal

This brings us to an interesting new paper from Switzerland, which claims to be the first to demonstrate that the original, real images that power synthetic data can be recovered from generated images that should, in theory, be entirely random:

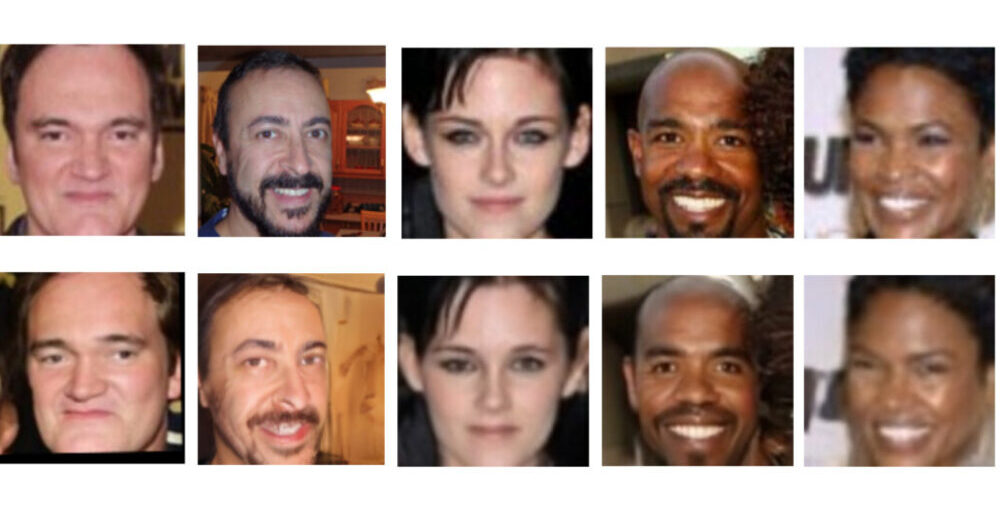

Example face images leaked from training data. In the row above, we see the original (real) images; in the row below, we see images generated at random, which accord significantly with the real images. Source: https://arxiv.org/pdf/2410.24015

The results, the authors argue, indicate that ‘synthetic’ generators have indeed memorized a great many of the training data points, in their search for greater granularity. They also indicate that systems which rely on synthetic data to shield AI producers from legal consequences could be very unreliable in this regard.

The researchers conducted an extensive study on six state-of-the-art synthetic datasets, demonstrating that in all cases, original (potentially copyrighted or protected) data can be recovered. They comment:

‘Our experiments demonstrate that state-of-the-art synthetic face recognition datasets contain samples that are very close to samples in the training data of their generator models. In some cases the synthetic samples contain small changes to the original image, however, we can also observe in some cases the generated sample contains more variation (e.g., different pose, light condition, etc.) while the identity is preserved.

‘This suggests that the generator models are learning and memorizing the identity-related information from the training data and may generate similar identities. This creates critical concerns regarding the application of synthetic data in privacy-sensitive tasks, such as biometrics and face recognition.’

The paper is titled Unveiling Synthetic Faces: How Synthetic Datasets Can Expose Real Identities, and comes from two researchers affiliated with the Idiap Research Institute at Martigny, and with the École Polytechnique Fédérale de Lausanne (EPFL) and the Université de Lausanne (UNIL) at Lausanne.

Method, Data and Results

The memorized faces in the study were revealed by a Membership Inference Attack. Though the concept sounds complicated, it is fairly self-explanatory: inferring membership, in this case, refers to the process of questioning a system until it reveals data that either matches the data you are looking for, or significantly resembles it.
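In its simplest similarity-based form, this kind of ‘questioning’ can be reduced to a threshold test in embedding space: take a face known to be in the generator’s training data, and check whether anything in the synthetic dataset sits suspiciously close to it. The sketch below is a generic illustration, not the authors’ procedure; the function names, the 0.5 threshold and the toy vectors are hypothetical, and in practice the embeddings would come from a face recognition model.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two embedding vectors (range -1 to 1)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def infer_membership(query, synthetic_embeddings, threshold=0.5):
    """Guess whether the identity behind `query` surfaces in a synthetic set.

    The guess is positive when at least one synthetic embedding is at least
    `threshold`-similar to the query (the threshold value is hypothetical).
    Returns (is_member, best_score).
    """
    best = max(cosine_similarity(query, s) for s in synthetic_embeddings)
    return best >= threshold, best


# Toy 3-D vectors stand in for real face embeddings, which are much larger.
synthetic = [[0.0, 1.0, 0.0], [0.9, 0.1, 0.0]]
print(infer_membership([1.0, 0.0, 0.0], synthetic))
print(infer_membership([0.0, 0.0, 1.0], synthetic))
```

The first query is flagged because one synthetic vector points in almost the same direction; the second is not, since nothing in the synthetic set resembles it.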

Further examples of inferred data sources, from the study. In this case, the source synthetic images are from the DCFace dataset.

The researchers studied six synthetic datasets for which the (real) dataset source was known. Since both the real and the fake datasets in question all contain a very high volume of images, this is effectively like looking for a needle in a haystack.

Therefore, to compare the faces automatically, the authors used an off-the-shelf facial recognition model† with a ResNet100 backbone, trained with the AdaFace loss function on the WebFace12M dataset.
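The search itself can be pictured as a brute-force nearest-neighbour lookup in the recognition model’s embedding space: each synthetic face is compared with every real training face, and the closest match is kept. The sketch below is a minimal pure-Python version under that assumption; once embeddings are normalized to unit length, cosine similarity reduces to a dot product. The function names and toy vectors are hypothetical.

```python
import math


def normalize(v):
    """Scale a vector to unit length so that a dot product equals cosine similarity."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]


def nearest_real_match(synthetic, real):
    """For each synthetic embedding, return (index, cosine) of its closest real embedding."""
    real_unit = [normalize(r) for r in real]
    matches = []
    for s in synthetic:
        s_unit = normalize(s)
        scores = [sum(a * b for a, b in zip(s_unit, r)) for r in real_unit]
        best = max(range(len(scores)), key=scores.__getitem__)
        matches.append((best, scores[best]))
    return matches


real = [[1.0, 0.0], [0.0, 1.0], [0.6, 0.8]]         # hypothetical training-set embeddings
synthetic = [[0.9, 0.1], [0.1, 0.9], [0.59, 0.81]]  # hypothetical synthetic embeddings
for i, (j, score) in enumerate(nearest_real_match(synthetic, real)):
    print(f"synthetic {i} -> real {j} (cosine {score:.3f})")
```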

The six synthetic datasets used were: DCFace (a latent diffusion model); IDiff-Face (Uniform – a diffusion model based on FFHQ); IDiff-Face (Two-stage – a variant using a different sampling method); GANDiffFace (based on Generative Adversarial Networks and Diffusion models, using StyleGAN3 to generate initial identities, and then DreamBooth to create varied examples); IDNet (a GAN method, based on StyleGAN-ADA); and SFace (an identity-protecting framework).

Since GANDiffFace uses both GAN and diffusion methods, it was compared to the training dataset of StyleGAN – the nearest to a ‘real-face’ origin that this network provides.

The authors excluded synthetic datasets that use CGI rather than AI methods, and in evaluating results discounted matches for children, due to distributional anomalies in this regard, as well as non-face images (which can frequently occur in face datasets, where web-scraping systems produce false positives for objects or artefacts that have face-like qualities).

Cosine similarity was calculated for all the retrieved pairs, and the scores were compiled into histograms, illustrated below:
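The histogram step can be sketched in a few lines. This is an assumed reconstruction rather than the paper’s code: it bins cosine scores into fixed-width bins and picks the k highest-scoring pairs, on the reading that the dashed lines in the figure mark the scores of those top-k pairs. The pair identifiers, scores and bin width are invented for illustration.

```python
import math
from collections import Counter


def similarity_histogram(scores, bins_per_unit=10):
    """Count cosine scores per bin; keys are each bin's lower edge (0.1-wide by default)."""
    counts = Counter(math.floor(s * bins_per_unit) for s in scores)
    return {index / bins_per_unit: n for index, n in sorted(counts.items())}


def top_k_pairs(pairs, k):
    """Return the k highest-scoring (synthetic_id, real_id, score) pairs."""
    return sorted(pairs, key=lambda p: p[2], reverse=True)[:k]


pairs = [("s0", "r3", 0.12), ("s1", "r7", 0.91), ("s2", "r1", 0.55),
         ("s3", "r5", 0.15), ("s4", "r2", 0.93), ("s5", "r9", 0.97)]
print(similarity_histogram([p[2] for p in pairs]))  # → {0.1: 2, 0.5: 1, 0.9: 3}
top = top_k_pairs(pairs, k=2)
print(top)                                          # → [('s5', 'r9', 0.97), ('s4', 'r2', 0.93)]
print("k-th score (cut-off line):", top[-1][2])     # → 0.93
```

A tall bin near the top of the similarity range is the pattern of interest: it means many synthetic faces have a near-identical counterpart in the real training data.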

A histogram representation of cosine similarity scores calculated across the diverse datasets, together with their related values of similarity for the top-k pairs (dashed vertical lines).

The spikes in the graph above show how many pairs fall at each level of similarity. The paper also features sample comparisons from the six datasets, and their corresponding estimated images in the original (real) datasets, of which some selections are featured below:

Samples from the many instances reproduced in the source paper, to which the reader is referred for a more comprehensive selection.

The paper comments:

‘[The] generated synthetic datasets contain very similar images from the training set of their generator model, which raises concerns regarding the generation of such identities.’

The authors note that scaling this particular approach up to higher-volume datasets is likely to be inefficient, since the necessary computation would be extremely burdensome. They further observe that visual comparison was necessary to infer matches, and that automated facial recognition alone would likely not be sufficient for a larger task.
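A back-of-the-envelope calculation shows why. Exhaustive matching compares every synthetic image with every real one, so the work grows with the product of the two dataset sizes. The sketch below assumes 512-dimensional embeddings and float32 scores; the function name is hypothetical, and the dataset sizes are illustrative rather than taken from the paper.

```python
def exhaustive_search_cost(n_synthetic, n_real, dim=512, bytes_per_score=4):
    """Rough cost of comparing every synthetic embedding with every real one.

    Returns (pairs, multiply_adds, bytes_for_full_score_matrix).
    """
    pairs = n_synthetic * n_real
    multiply_adds = pairs * dim             # one length-`dim` dot product per pair
    matrix_bytes = pairs * bytes_per_score  # if every score were held in memory at once
    return pairs, multiply_adds, matrix_bytes


# Hypothetical sizes: 500,000 synthetic faces against 10 million real ones.
pairs, macs, nbytes = exhaustive_search_cost(500_000, 10_000_000)
print(f"{pairs:.1e} pairs, {macs:.1e} multiply-adds, {nbytes / 1e12:.0f} TB of scores")
```

Processing the scores in chunks keeps memory bounded, but it does not reduce the number of comparisons; approximate nearest-neighbour indexes can, at the cost of possibly missing some matches.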

Regarding the implications of the research, and with a view to roads forward, the work states:

‘[We] would like to highlight that the main motivation for generating synthetic datasets is to address privacy concerns in using large-scale web-crawled face datasets.

‘Therefore, the leakage of any sensitive information (such as identities of real images in the training data) in the synthetic dataset spikes critical concerns regarding the application of synthetic data for privacy-sensitive tasks, such as biometrics. Our study sheds light on the privacy pitfalls in the generation of synthetic face recognition datasets and paves the way for future studies toward generating responsible synthetic face datasets.’

Though the authors promise a code release for this work at the project page, no repository link was available at the time of writing.

Conclusion

Lately, media attention has emphasized the diminishing returns obtained by training AI models on AI-generated data.

The new Swiss research, however, brings into focus a consideration that may be more pressing for the growing number of companies that wish to leverage and profit from generative AI: the persistence of IP-protected or unauthorized data patterns, even in datasets that are designed to combat this practice. If the phenomenon needed a name, it might be called ‘face-washing’.

* However, Adobe’s decision to allow users to upload AI-generated images to Adobe Stock has effectively undermined the legal ‘purity’ of this data. Bloomberg reported in April of 2024 that user-supplied images from the Midjourney generative AI system had been incorporated into Firefly’s training data.

† This model is not identified in the paper.

First published Wednesday, November 6, 2024